Master the Agentic Autonomy Ratio (AAR) to measure AI agent independence. Learn how AAR scales SaaS, FinTech, and Healthcare operations in 2026.

Key Takeaways

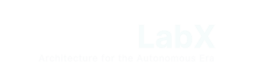

- Definition: The Agentic Autonomy Ratio (AAR) measures the percentage of tasks an AI agent completes without human intervention.

- Efficiency Metric: High AAR indicates lower operational costs and faster scaling of complex workflows.

- Human-in-the-Loop (HITL): AAR helps identify where human intervention is most critical for safety and quality.

- Industry Standards: In 2026, leading enterprises target an AAR of 85% for routine business processes.

- Optimization: Improving AAR requires iterative feedback loops and high-quality synthetic data to train agents.

- Strategic Growth: Companies with higher AAR scores typically experience 3x faster response times in customer-facing roles.

Introduction

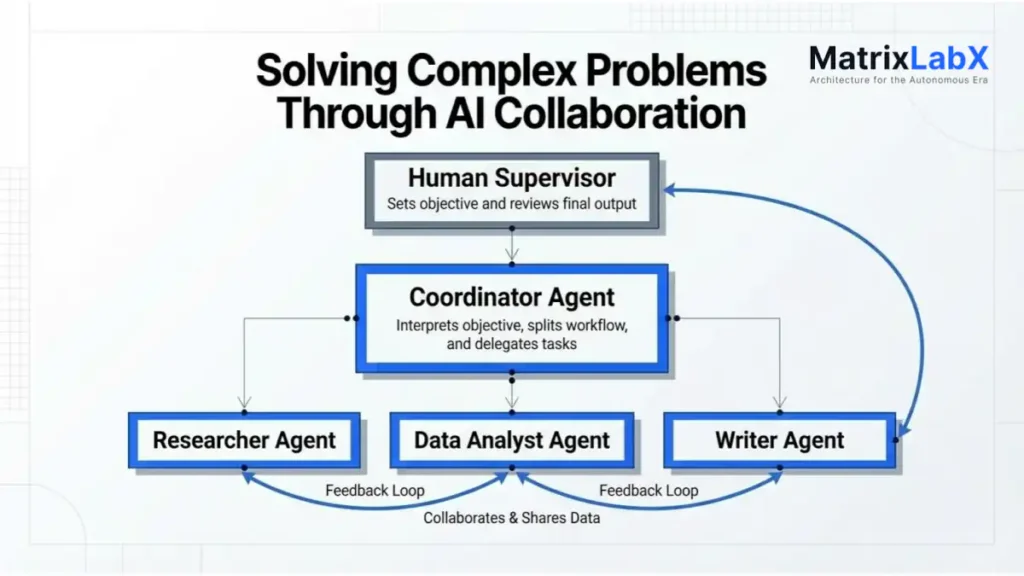

The Agentic Autonomy Ratio (AAR) is the primary metric for quantifying the degree of independence an artificial intelligence agent exhibits when executing complex, multi-step workflows.

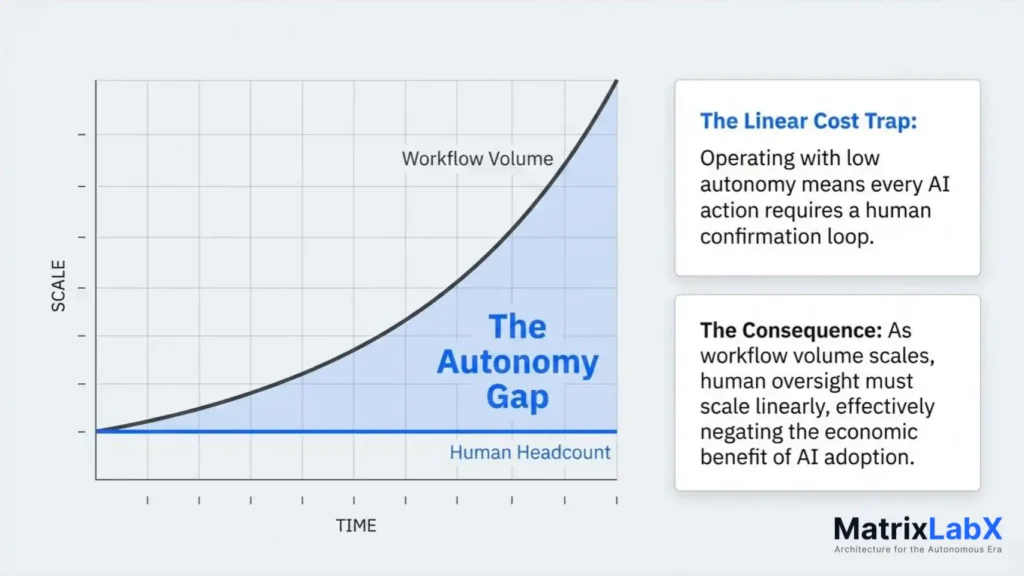

As organizations shift from static LLM prompts to dynamic agentic architectures, measuring the “autonomy gap”—the difference between total task requirements and the degree of independent execution—has become a competitive necessity.

This article explores the technical frameworks, statistical benchmarks, and implementation strategies required to master the Agentic Autonomy Ratio in a high-velocity AI economy.

By standardizing how we measure AI independence, the AAR framework provides a clear roadmap for scaling operations without linear increases in human overhead. Whether in FinTech, Manufacturing, or SaaS, understanding your organization’s AAR is the first step toward achieving true agentic maturity.

Understanding the Agentic Autonomy Ratio Framework

What is the Agentic Autonomy Ratio (AAR)?

The Agentic Autonomy Ratio (AAR) is a performance metric that measures the proportion of tasks or sub-steps completed by an AI agent without manual human intervention. It is expressed as the number of autonomous completions divided by the total number of tasks required within a specific workflow.

How Does Agentic Autonomy Work?

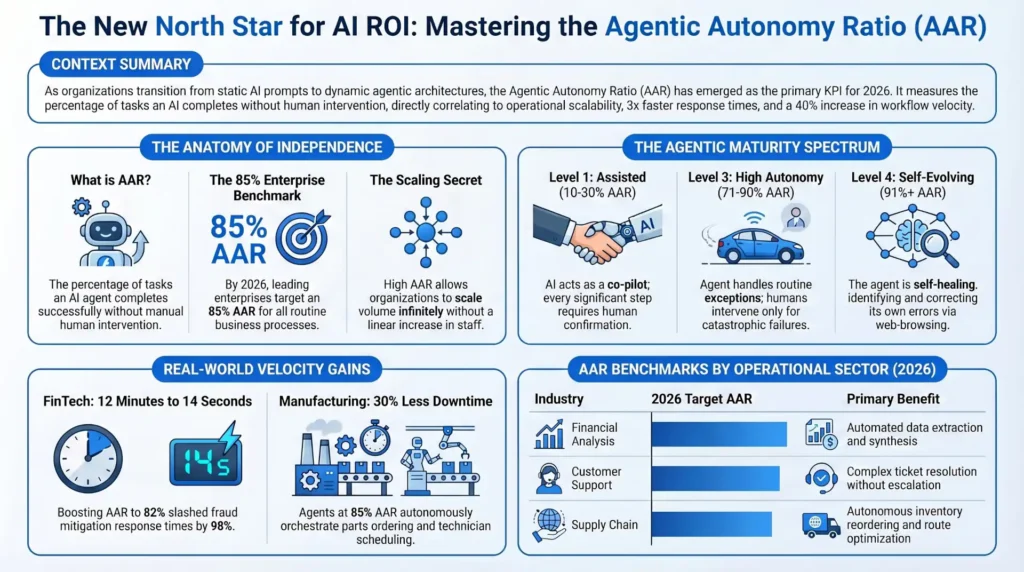

Agentic autonomy works through a continuous loop of perception, reasoning, and action. First, the agent analyzes the objective; second, it decomposes the goal into sub-tasks; third, it executes actions via APIs or tools; and fourth, it self-evaluates results. If the agent reaches a confidence threshold, it proceeds autonomously; otherwise, it triggers a human-in-the-loop (HITL) request.
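The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `run_workflow` function, the `StepResult` type, the stub components, and the 0.8 threshold are all assumptions made for the example, since the article does not specify a threshold value or an agent framework.

```python
from dataclasses import dataclass
from typing import Callable, List

CONFIDENCE_THRESHOLD = 0.8  # assumed cutoff; the article does not give a value


@dataclass
class StepResult:
    sub_task: str
    output: str
    confidence: float  # the agent's self-evaluation score in [0, 1]
    escalated: bool    # True when the step is handed to a human (HITL)


def run_workflow(
    objective: str,
    decompose: Callable[[str], List[str]],
    execute: Callable[[str], str],
    evaluate: Callable[[str, str], float],
    threshold: float = CONFIDENCE_THRESHOLD,
) -> List[StepResult]:
    """Decompose the objective, execute each sub-task, self-evaluate, escalate if unsure."""
    results = []
    for sub_task in decompose(objective):
        output = execute(sub_task)
        confidence = evaluate(sub_task, output)
        # Below the threshold the step becomes a human-in-the-loop request.
        results.append(StepResult(sub_task, output, confidence, confidence < threshold))
    return results


# Stub components (hypothetical) so the sketch runs without a model or real tools.
def demo_decompose(objective: str) -> List[str]:
    return ["look up order", "issue refund"]


def demo_execute(sub_task: str) -> str:
    return f"done: {sub_task}"


def demo_evaluate(sub_task: str, output: str) -> float:
    return 0.95 if sub_task == "look up order" else 0.40


results = run_workflow("resolve refund ticket", demo_decompose, demo_execute, demo_evaluate)
for r in results:
    route = "HITL request" if r.escalated else "autonomous"
    print(f"{r.sub_task}: confidence={r.confidence:.2f} -> {route}")
```

Passing the decompose, execute, and evaluate steps in as callables keeps the escalation rule separate from any particular model or tool, which makes the threshold easy to tune per workflow.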


The Mathematical Foundation of AAR

To accurately calculate AAR, organizations use the following formula:

AAR = \left( \frac{T_a}{T_t} \right) \times (1 - R_{hi})

Where:

- T_a: Total tasks attempted by the agent.

- T_t: Total tasks required in the workflow.

- R_{hi}: Rate of human intervention, expressed as a fraction between 0 and 1 (the share of attempted tasks requiring manual correction or approval).

A higher AAR indicates a more sophisticated agent capable of handling edge cases and recovering from errors without external guidance.
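As a quick worked example of the formula, here is a small Python helper. The function name and the sample figures (90 of 100 tasks routed to the agent, 10% of those needing a human) are illustrative assumptions, and the intervention rate is passed as a fraction rather than a percentage.

```python
def agentic_autonomy_ratio(tasks_attempted: int, tasks_total: int, intervention_rate: float) -> float:
    """Return AAR = (T_a / T_t) * (1 - R_hi) as a fraction between 0 and 1."""
    if tasks_total <= 0:
        raise ValueError("tasks_total must be positive")
    if not 0 <= tasks_attempted <= tasks_total:
        raise ValueError("tasks_attempted must be between 0 and tasks_total")
    if not 0.0 <= intervention_rate <= 1.0:
        raise ValueError("intervention_rate must be a fraction between 0 and 1")
    return (tasks_attempted / tasks_total) * (1.0 - intervention_rate)


# Hypothetical workflow: 100 tasks, 90 routed to the agent, 10% of those need a human.
aar = agentic_autonomy_ratio(tasks_attempted=90, tasks_total=100, intervention_rate=0.10)
print(f"AAR = {aar:.1%}")
```

With these inputs the two factors are 0.9 and 0.9, so the workflow lands at roughly 81%. Routing more tasks to the agent and reducing interventions both raise the result, and the product form shows which lever is holding the number down.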

Why is Agentic Autonomy Ratio Important?

AAR is critical because it directly correlates with operational scalability and ROI. By measuring the Agentic Autonomy Ratio, businesses can identify bottlenecks where AI models fail, optimize resource allocation, and reduce the "human-to-agent" ratio. High AAR scores enable 24/7 operations and significant reductions in unit labor costs.

Statistical Benchmarks for 2026

According to recent industry data, the adoption of agentic workflows has led to specific performance benchmarks across sectors:

- Customer Support: Top-performing agents now achieve an 88% AAR, resolving complex tickets without human escalation.

- Software Development: AI-driven coding agents maintain a 62% AAR for bug fixing and unit testing.

- Financial Analysis: Automated reporting tools reach a 92% AAR in data extraction and synthesis.

- Supply Chain: Logistics agents manage an 81% AAR for inventory reordering and route optimization.

Recent studies show that enterprises prioritizing AAR optimization see a 40% increase in workflow velocity compared to those using traditional automation.

The Spectrum of Agentic Autonomy

Autonomy is not binary; it exists on a spectrum that determines how much "thinking" the AI performs versus how much it simply "follows."

Level 1: Assisted Agency (AAR 10-30%)

At this level, the AI acts as a co-pilot. It provides suggestions, drafts content, or formats data, but every significant step requires human confirmation. This is common in highly regulated fields such as law or medical diagnostics.

Competitive Disadvantage of Low Agentic Autonomy

Operating at Level 1 (Assisted Agency, AAR 10-30%) presents a significant competitive disadvantage in the modern AI-driven economy. While providing a necessary safety buffer in high-stakes fields, reliance on this low AAR level fundamentally restricts scalability and increases operational expenditures compared to market leaders.

1. Inefficient Scaling and High Overhead

A low AAR means every significant action—suggestion, draft, or data format—requires a human confirmation loop.

This transforms the AI from an autonomous worker into a mere productivity tool, leading to:

- Linear Cost Scaling: As workflow volume increases, the need for human oversight scales linearly. Unlike high-AAR competitors who can handle 10x the volume with the same human team, low-AAR businesses must hire new staff to keep pace.

- High Human-to-Agent Ratio: The required "human-to-agent" ratio remains high, effectively tying human labor to every step and negating the primary economic benefit of AI adoption.
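To make the staffing effect concrete, the back-of-the-envelope model below estimates how many reviewers a workflow needs at a given AAR. Every number in it is an assumption chosen for illustration (five minutes per review, seven review hours per person per day), and `reviewers_needed` is a hypothetical helper, not a published formula.

```python
import math


def reviewers_needed(
    tasks_per_day: int,
    aar: float,
    minutes_per_review: float = 5.0,          # assumed average review time
    reviewer_minutes_per_day: float = 420.0,  # assumed 7 productive hours per reviewer
) -> int:
    """Estimate headcount needed to review the tasks the agent cannot finish alone."""
    escalated_tasks = tasks_per_day * (1.0 - aar)
    return math.ceil(escalated_tasks * minutes_per_review / reviewer_minutes_per_day)


for volume in (1_000, 10_000):
    low = reviewers_needed(volume, aar=0.20)
    high = reviewers_needed(volume, aar=0.85)
    print(f"{volume} tasks/day: AAR 20% -> {low} reviewers, AAR 85% -> {high} reviewers")
```

In this simple model the review load grows with volume at any AAR; what changes is the slope. At a 20% AAR, every additional thousand daily tasks adds roughly ten reviewers, while at 85% it adds about two.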

2. Workflow Latency and Reduced Velocity

The need for constant human intervention introduces significant workflow latency. The agent must wait for human approval, often encountering delays due to shifts, weekends, or competing priorities.

- Slow Response Times: In customer-facing roles (e.g., support or sales), slow turnaround times translate directly into poor customer experience and lost opportunities.

- Limited 24/7 Operation: True 24/7 operations are impossible when human confirmation is a bottleneck, restricting global business capabilities.

3. Loss of Data Flywheel Advantage

Enterprises with higher levels of autonomy generate more high-quality, successful autonomous completion logs.

This data feeds back into the system (Synthetic Data Fine-Tuning), making the agent smarter—the "flywheel effect."

- Low AAR systems generate logs dominated by human interventions or approvals, providing less clean data for true agentic self-improvement. The competitive gap widens as high-AAR competitors continually improve their agents based on real-world success, while low-AAR systems remain stagnant.

4. Opportunity Cost and Talent Misallocation

By keeping highly skilled personnel locked into approval and oversight roles, the organization incurs a massive opportunity cost.

- Reduced Innovation: Talent is diverted from strategic work (such as modeling new workflows or improving customer strategy) to tactical, repetitive tasks of confirming AI outputs.

- Employee Burnout: Repetitive review and approval cycles contribute to professional fatigue and lower job satisfaction among expert staff.

In summary, maintaining a low Agentic Autonomy Ratio transforms the AI investment from a strategic asset into an operational liability, hindering growth velocity and ensuring a perpetually higher cost base per unit of output.

Level 2: Conditional Autonomy (AAR 31-70%)

The agent can handle routine sequences but requires human input for decisions in the "gray area". For example, a marketing agent might draft and schedule social media posts but require a human to approve the final creative assets.

The agent operates with a semi-autonomous workflow, demonstrating proficiency in executing established, routine sequences of tasks. This capacity allows it to streamline high-frequency, low-variability operations, significantly boosting efficiency and reducing the human workload on predictable elements of a project. However, this autonomy has clear boundaries.

Crucially, the agent's mandate stops at points requiring judgment in the "gray area"—situations characterized by ambiguity, novel constraints, ethical considerations, or subjective aesthetic evaluation. These are the decision points where adherence to a rulebook is insufficient and nuanced, contextual human intelligence is required.

Example: Marketing Agent Workflow

A typical implementation of this Agentic Autonomy Ratio can be seen in a marketing context:

- Autonomous Phase (Routine Sequence): The marketing agent, utilizing pre-approved copy and brand guidelines, can independently perform the following tasks:

  - Drafting Posts: Generating textual content for social media channels (e.g., Twitter, LinkedIn, Instagram captions) based on a product announcement or content calendar.

  - Scheduling: Placing these drafted posts into the queue for publication at optimal times determined by audience analytics.

  - Initial Data Aggregation: Pulling performance metrics (likes, shares, clicks) after the post is live.

- Human Input Phase ("Gray Area" Decision): The process halts when a qualitative or high-stakes decision is needed:

  - Creative Asset Approval: The agent can draft text, but a human must approve the final creative assets (images, videos, infographics). This is a "gray area" decision because it involves subjective elements like brand consistency, emotional impact, and visual appeal, which are too nuanced for the agent's current logic model.

  - Crisis Management/Reputation Control: Should a post receive unexpected negative backlash, the agent cannot autonomously determine the appropriate response (e.g., deleting the post, drafting an apology, ignoring the comments); this immediately escalates to a human manager.

In essence, the agent is a highly effective executor of defined processes, but the human retains the essential role as the final arbiter of quality, intent, and contextual relevance when the task shifts from execution to complex judgment.
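The split between routine execution and gray-area judgment in the marketing example can be expressed as a simple routing table. The task names, the `Route` enum, and the `route_task` function below are hypothetical labels invented for this sketch. Defaulting unknown task types to human review is a design assumption, not something the workflow description prescribes.

```python
from enum import Enum
from typing import List


class Route(Enum):
    AUTONOMOUS = "autonomous"
    HUMAN_REVIEW = "human_review"


# Hypothetical task labels mirroring the marketing example.
AUTONOMOUS_TASKS = {"draft_post", "schedule_post", "aggregate_metrics"}
GRAY_AREA_TASKS = {"approve_creative_asset", "respond_to_backlash"}


def route_task(task_type: str) -> Route:
    """Send routine tasks to the agent; gray-area and unknown tasks go to a human."""
    if task_type in AUTONOMOUS_TASKS:
        return Route.AUTONOMOUS
    # Anything not explicitly cleared for autonomy is escalated (assumed safe default).
    return Route.HUMAN_REVIEW


def autonomous_share(task_types: List[str]) -> float:
    """Fraction of tasks in a campaign that the agent handles on its own."""
    if not task_types:
        return 0.0
    autonomous = sum(1 for t in task_types if route_task(t) is Route.AUTONOMOUS)
    return autonomous / len(task_types)


campaign = ["draft_post", "approve_creative_asset", "schedule_post", "aggregate_metrics"]
for task in campaign:
    print(f"{task} -> {route_task(task).value}")
print(f"Autonomous share: {autonomous_share(campaign):.0%}")
```

For this four-task campaign, three tasks run autonomously, which places the workflow just above the Level 2 band.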

Level 3: High Autonomy (AAR 71-90%)

The agent operates independently within a defined sandbox. Humans only intervene for catastrophic failures or extreme edge cases. This level is the current goal for most enterprise SaaS and FinTech applications.

Level 3 in Practice: Near-Full Autonomy

This operational level is characterized by the agent functioning with a high degree of independence, confined to a strictly defined operational environment—often referred to as a "sandbox."

The core principle is that the agent is empowered to execute its mandate, handle a vast majority of routine exceptions, and self-correct minor deviations without requiring human oversight or intervention.

Human involvement at this stage is extremely limited and strictly reserved for specific, high-stakes scenarios. Intervention protocols are only triggered under two primary conditions:

- Catastrophic Failures: Systemic breakdowns, unrecoverable data corruption, security breaches, or any event that jeopardizes the platform's core integrity or compliance standing. These are failures that the agent's internal recovery mechanisms cannot resolve and which require high-level, often multi-disciplinary, human decision-making.

- Extreme Edge Cases: Highly novel, ambiguous, or rare events that fall outside the agent's trained parameters or probabilistic models. These typically involve situations where ethical judgment, new regulatory interpretations, or nuanced strategic decisions—which exceed the scope of the agent's programmed rules—are necessary.

Achieving this level of near-full autonomy represents the current benchmark and strategic goal for a significant portion of the enterprise technology landscape, particularly within SaaS (Software as a Service) and FinTech (Financial Technology) applications.

In these domains, Level 3 autonomy drives efficiencies by minimizing human-in-the-loop costs, ensuring rapid, consistent execution of complex transactions, and providing 24/7 operational reliability.

For FinTech, this level is crucial for algorithmic trading, compliance monitoring, and high-frequency transaction processing, where speed and consistency are paramount. For enterprise SaaS, it enables scalable, lights-out operation of core services such as resource provisioning, complex data migration, and automated security patching.

Level 4: Full Agentic Autonomy (AAR 91%+)

The agent is designed for robust, autonomous operation and is both self-healing and self-optimizing. It identifies its own errors and corrects them by browsing the web, checking documentation, or testing alternative code paths.

This operational resilience is achieved through a sophisticated internal monitoring and error-detection framework. When the system encounters an error, anomaly, or sub-optimal performance—whether a code execution failure, a logical inconsistency, or a resource bottleneck—it does not simply halt or report the failure. Instead, it initiates an internal diagnostic process.

The self-correction mechanism is multifaceted. Firstly, the agent analyzes the internal state and the specific context of the error. Secondly, it leverages external knowledge sources for resolution.

This involves actively browsing the web to search for known solutions, similar reported issues, or best practices documented by the broader development community. It also critically reviews existing documentation, cross-referencing it with its internal codebase, configuration files, and system manuals to ensure compliance and identify potential misconfigurations.

Most critically, the agent tests alternative code paths. This involves not only iterative debugging and patching but also the proactive generation, testing, and validation of entirely new subroutines or sequences of actions.

It treats the resolution process as a mini-development cycle, isolating the problematic component, proposing multiple fixes, and running rapid internal validation tests (such as unit or integration tests) to ensure the proposed correction not only resolves the immediate issue but also preserves overall system integrity and performance.

This continuous loop of identification, diagnosis, search, and validation is what defines the agent's high degree of agentic autonomy.
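The four bands on the spectrum can be turned into a small lookup for dashboards or audits. The function below is a sketch: it assumes each band's upper bound is inclusive (so 30.5% counts as Level 2), and the "Below Level 1" label for values under 10% is an addition, since the spectrum does not name that range.

```python
def autonomy_level(aar_percent: float) -> str:
    """Map an AAR percentage onto the four levels described in this section."""
    if not 0 <= aar_percent <= 100:
        raise ValueError("aar_percent must be between 0 and 100")
    if aar_percent > 90:
        return "Level 4: Full Agentic Autonomy"
    if aar_percent > 70:
        return "Level 3: High Autonomy"
    if aar_percent > 30:
        return "Level 2: Conditional Autonomy"
    if aar_percent >= 10:
        return "Level 1: Assisted Agency"
    return "Below Level 1"  # assumed label; the spectrum starts at 10%


for value in (25, 62, 85, 92):
    print(f"AAR {value}% -> {autonomy_level(value)}")
```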

Expert Insights on Agentic Systems

“The transition from ‘Chat’ to ‘Agent’ is defined by the Agentic Autonomy Ratio. If your AI isn't making decisions on its own, it's just a sophisticated typewriter.” — Dr. Aris Thorne, Lead Researcher at the Institute for Applied AI

“In 2026, the most valuable companies won't be those with the largest models, but those with the highest AAR across their core business units.” — George Schildge, CEO at MatrixLabX

Step-by-Step Framework for Improving AAR

To increase the Agentic Autonomy Ratio within your organization, follow this four-stage implementation framework:

Step 1: Baseline Audit

Measure your current manual intervention rate. Identify the "break points"—the specific prompts or actions where the agent consistently fails or stalls.

Measuring and Analyzing the Agentic Autonomy Ratio

The foundation of increasing the Agentic Autonomy Ratio (AAR) lies in a rigorous and data-driven assessment of the current operational state.

1. Quantify Manual Intervention:

- Measure your current manual intervention rate. This is a critical starting metric, calculated as the total number of manual adjustments, corrections, or complete takeovers divided by the total number of tasks attempted by the autonomous agent over a defined period. This rate provides a clear, quantitative baseline for the agent's current level of dependency. For a more granular view, segment this rate by task complexity, user type, or time of day. High intervention rates signal critical gaps in the agent's understanding or execution capabilities.

2. Deep-Dive Failure Analysis:

- Identify the "break points"—the specific prompts or actions where the agent consistently fails or stalls. A break point is not just a failure; it is a systemic vulnerability. These points must be meticulously documented and categorized.

- Failure Categories:

  - Ambiguity Failures: The agent stalls due to unclear or conflicting instructions in the prompt.

  - Contextual Drifts: The agent loses track of the initial objective or forgets preceding steps in a multi-stage task.

  - Tool/API Execution Errors: The agent correctly interprets the task but fails to execute the necessary external action (e.g., a function call, API interaction, or data retrieval) due to incorrect parameters or unexpected responses.

  - Boundary Conditions: The agent fails when presented with input at the extremes of its training data or capability (e.g., exceptionally long documents, highly nuanced requests, or domain-specific jargon).

  - Hallucination/Misdirection: The agent confidently generates incorrect or irrelevant information, leading the process astray and requiring immediate human correction.


Thorough identification and subsequent analysis of these breakpoints are essential for targeting specific agent improvements (e.g., enhanced prompt engineering, improved error-handling logic, or updated knowledge-retrieval mechanisms) rather than making generalized, inefficient adjustments.

This targeted approach is the most efficient path toward increasing the AAR.
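A baseline audit along these lines can start from nothing more than a task log. The sketch below assumes a hypothetical log format (a list of dictionaries with `complexity`, `intervened`, and `failure` fields) and made-up sample records. It computes the overall intervention rate, the rate per segment, and a ranked list of break points.

```python
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

# Hypothetical audit log; field names and values are invented for illustration.
logs = [
    {"task_id": 1, "complexity": "low", "intervened": False, "failure": None},
    {"task_id": 2, "complexity": "low", "intervened": False, "failure": None},
    {"task_id": 3, "complexity": "low", "intervened": True, "failure": "ambiguity"},
    {"task_id": 4, "complexity": "high", "intervened": True, "failure": "tool_api_error"},
    {"task_id": 5, "complexity": "high", "intervened": True, "failure": "tool_api_error"},
    {"task_id": 6, "complexity": "high", "intervened": False, "failure": None},
]


def intervention_rate(records: List[dict]) -> float:
    """Manual interventions divided by tasks attempted."""
    if not records:
        return 0.0
    return sum(1 for r in records if r["intervened"]) / len(records)


def rate_by_segment(records: List[dict], key: str) -> Dict[str, float]:
    """Intervention rate for each value of a segmenting field, e.g. task complexity."""
    groups = defaultdict(list)
    for record in records:
        groups[record[key]].append(record)
    return {segment: intervention_rate(items) for segment, items in groups.items()}


def break_points(records: List[dict]) -> List[Tuple[str, int]]:
    """Failure categories ranked by how often they forced a human to step in."""
    return Counter(r["failure"] for r in records if r["failure"]).most_common()


print(f"Overall intervention rate: {intervention_rate(logs):.0%}")
print("By complexity:", rate_by_segment(logs, "complexity"))
print("Break points:", break_points(logs))
```

Ranking failure categories by frequency is what turns the audit into a work list: in this sample, tool and API errors would be the first target.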

Step 2: Semantic Tool Grounding

Ensure the agent has clear, unambiguous access to tools and APIs. Provide high-quality documentation (metadata) that the LLM can parse to understand each tool's purpose and constraints.
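One common way to give an agent unambiguous tool metadata is a JSON-Schema-style description, paired with a check that runs before any call is executed. The tool name `reorder_inventory`, its parameters, and the `validate_call` helper below are hypothetical. The layout loosely follows the JSON Schema vocabulary rather than any specific vendor's function-calling format.

```python
from typing import Any, Dict, List

# Hypothetical tool description; the name, limits, and wording are illustrative.
REORDER_TOOL: Dict[str, Any] = {
    "name": "reorder_inventory",
    "description": "Place a purchase order for one SKU. Use only when stock is below the reorder point.",
    "parameters": {
        "type": "object",
        "properties": {
            "sku": {"type": "string", "description": "Internal stock-keeping unit code."},
            "quantity": {
                "type": "integer",
                "minimum": 1,
                "maximum": 500,
                "description": "Units to order; larger orders require human approval.",
            },
        },
        "required": ["sku", "quantity"],
    },
}

PYTHON_TYPES = {"string": str, "integer": int}


def validate_call(tool: Dict[str, Any], args: Dict[str, Any]) -> List[str]:
    """Return a list of problems with a proposed tool call; empty means it may run."""
    errors = []
    params = tool["parameters"]
    for name in params["required"]:
        if name not in args:
            errors.append(f"missing required argument: {name}")
    for name, value in args.items():
        spec = params["properties"].get(name)
        if spec is None:
            errors.append(f"unknown argument: {name}")
            continue
        if not isinstance(value, PYTHON_TYPES[spec["type"]]):
            errors.append(f"{name} must be of type {spec['type']}")
            continue
        if "minimum" in spec and value < spec["minimum"]:
            errors.append(f"{name} is below the minimum of {spec['minimum']}")
        if "maximum" in spec and value > spec["maximum"]:
            errors.append(f"{name} is above the maximum of {spec['maximum']}")
    return errors


print(validate_call(REORDER_TOOL, {"sku": "A-100", "quantity": 20}))
print(validate_call(REORDER_TOOL, {"sku": "A-100", "quantity": 900}))
```

Stating constraints such as the quantity ceiling in the metadata itself lets the model see the limit when it plans, and lets the runtime reject a bad call before it reaches the API.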

Step 3: Recursive Self-Correction Loops

Implement a "reflection" step in which the agent reviews its output against the initial prompt. This simple architectural change can often boost AAR by 15-20% by catching hallucination errors before they reach the user.
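A reflection step can be as small as a loop that regenerates a draft until a critique pass finds nothing to fix. In the sketch below, `generate_with_reflection` and both stub functions are hypothetical stand-ins for model calls, and the three-round limit is an assumed default.

```python
from typing import Callable, Optional, Tuple


def generate_with_reflection(
    prompt: str,
    generate: Callable[[str, Optional[str]], str],
    critique: Callable[[str, str], Optional[str]],
    max_rounds: int = 3,  # assumed cap so the loop cannot run forever
) -> Tuple[str, bool]:
    """Return (draft, accepted). accepted is False when a human should take over."""
    feedback: Optional[str] = None
    draft = ""
    for _ in range(max_rounds):
        draft = generate(prompt, feedback)
        # The critique compares the draft with the original prompt; None means no issues.
        feedback = critique(prompt, draft)
        if feedback is None:
            return draft, True
    return draft, False


# Stubs standing in for model calls so the sketch runs offline.
def demo_generate(prompt: str, feedback: Optional[str]) -> str:
    if feedback is None:
        return "The customer asked for a refund and we issued it"
    return "Refund issued"


def demo_critique(prompt: str, draft: str) -> Optional[str]:
    return None if len(draft.split()) <= 5 else "Too long: use five words or fewer"


draft, accepted = generate_with_reflection(
    "Summarize the ticket in five words or fewer", demo_generate, demo_critique
)
print(draft, accepted)
```

The `accepted` flag matters for measurement: a draft that exhausts its rounds without passing the critique should count as a human intervention, not as an autonomous completion.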

Step 4: Synthetic Data Fine-Tuning

Use the logs of successful autonomous runs to generate synthetic data for fine-tuning. This creates a "flywheel effect" in which high AAR leads to better training data, which in turn further increases AAR.
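The first half of that flywheel is selecting the right logs. The sketch below filters a hypothetical run log down to successful, fully autonomous runs and serializes them as JSON Lines. The field names and the prompt/completion layout are assumptions, not any provider's required fine-tuning format, and generating synthetic variations from these seed examples would be a separate step.

```python
import json
from typing import Dict, List

# Hypothetical run log; field names and contents are invented for illustration.
run_logs = [
    {"prompt": "Reset password for user 42", "output": "Reset link sent", "succeeded": True, "human_intervened": False},
    {"prompt": "Refund order 981", "output": "Refund issued", "succeeded": True, "human_intervened": True},
    {"prompt": "Close duplicate ticket 77", "output": "Error: ticket not found", "succeeded": False, "human_intervened": False},
]


def build_finetune_examples(logs: List[dict]) -> List[Dict[str, str]]:
    """Keep only runs that succeeded with no human help, as prompt/completion pairs."""
    examples = []
    for run in logs:
        if run["succeeded"] and not run["human_intervened"]:
            examples.append({"prompt": run["prompt"], "completion": run["output"]})
    return examples


def to_jsonl(examples: List[Dict[str, str]]) -> str:
    """Serialize examples as JSON Lines, one training record per line."""
    return "\n".join(json.dumps(example) for example in examples)


examples = build_finetune_examples(run_logs)
print(to_jsonl(examples))
```

Excluding runs that needed a human keeps corrected or partially manual outputs from being learned as if the agent had produced them alone.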

Pros and Cons of High Agentic Autonomy

| Feature | Pros of High AAR | Cons of High AAR |
| --- | --- | --- |
| Operational Speed | Near-instant task execution and 24/7 availability. | Potential for "speedy" errors to propagate through a system. |
| Cost Efficiency | Drastic reduction in human labor costs per unit of output. | High initial setup and monitoring costs for complex agents. |
| Scalability | Scale operations infinitely without hiring more staff. | Increased infrastructure and API token consumption costs. |
| Risk Management | Reduced human error in repetitive, high-volume tasks. | Risks of "agentic drift" where AI deviates from intended goals. |

Industry Applications and Case Studies

FinTech: Autonomous Fraud Mitigation

A leading global FinTech firm implemented an agentic workflow to handle suspicious transaction flags. By increasing their Agentic Autonomy Ratio from 45% to 82%, they reduced the time to freeze fraudulent accounts from 12 minutes to 14 seconds. The agent autonomously verified the user's identity using multi-channel prompts and cross-referenced external databases, without human sign-off.

SaaS: Automated Customer Success

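SaaS firms facing high customer acquisition cost (CAC) and "SaaS fatigue" often rely on static CRM data, which leads to missed signals and lost profits. Autonomous sales development representatives (SDRs) that detect life-event signals and execute outreach are positioned to deliver a 50% reduction in CAC.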

A mid-market SaaS provider utilized agents to manage user onboarding. The agents autonomously monitored user activity and reached out with personalized tutorials when users stalled. By maintaining a 90% AAR, the company saw a 25% increase in trial-to-paid conversion without increasing its customer success headcount.

Healthcare: Clinical Documentation Agents

In the MedTech sector, hospitals are using agents to transcribe and code clinical notes for insurance billing. By achieving a 75% AAR, these systems allow physicians to spend more time with patients. Human reviewers step in only when the agent flags a coding ambiguity between ICD-10 and ICD-11.

Manufacturing: Predictive Maintenance Orchestration

An industrial manufacturing plant deployed agents to monitor IoT sensors across the factory floor. When a sensor predicts a failure, the agent autonomously checks the inventory for spare parts, orders them if any are missing, and schedules a technician. This system operates at an 85% AAR, resulting in a 30% reduction in unplanned downtime.
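The orchestration in this case study follows a short, fixed sequence, sketched below. Everything here is hypothetical: the `MaintenancePlan` type, the part names, and the in-memory inventory stand in for whatever ERP and scheduling systems a real plant would use.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class MaintenancePlan:
    machine_id: str
    parts_ordered: List[str] = field(default_factory=list)
    technician_scheduled: bool = False


def handle_predicted_failure(
    machine_id: str,
    required_parts: List[str],
    inventory: Dict[str, int],
) -> MaintenancePlan:
    """Check stock for each required part, order what is missing, then book a technician."""
    plan = MaintenancePlan(machine_id)
    for part in required_parts:
        if inventory.get(part, 0) < 1:
            # A real system would call a purchasing API here; this sketch only records it.
            plan.parts_ordered.append(part)
    plan.technician_scheduled = True
    return plan


# Hypothetical stock levels and a predicted failure on one machine.
stock = {"bearing-6204": 3, "drive-belt-A": 0}
plan = handle_predicted_failure("press-07", ["bearing-6204", "drive-belt-A"], stock)
print(plan)
```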

The Future of AAR: Moving Toward Self-Evolving Systems

As we look toward 2027 and beyond, the Agentic Autonomy Ratio will likely evolve into a "Self-Evolution Ratio." We are entering an era where AI agents will not only execute tasks autonomously but will also identify the need for new tools and build them without human assistance.

The ultimate goal for any AI-driven enterprise is to reach a state of "Stable Autonomy," where the AAR is high enough to drive massive growth, yet monitored enough to ensure ethical and operational safety. Those who master the metrics of autonomy today will be the architects of the autonomous economy tomorrow.

Key Learning Points

- AAR is a Critical KPI: In 2026, measuring AI independence is as important as measuring revenue.

- Structure Matters: High AAR requires structured environments and clear semantic definitions for AI to succeed.

- Human-Centricity: The goal of high AAR is not to eliminate humans, but to elevate them to higher-level oversight roles.

- Iterative Improvement: AAR is not a static number; it must be audited and optimized through recursive feedback loops.

- Cross-Industry Impact: From SaaS to Manufacturing, AAR is the universal metric for agentic success.

FAQ

How do I calculate the Agentic Autonomy Ratio for my business?

To calculate AAR, divide the number of tasks an AI agent completes without any human help by the total number of tasks assigned. For example, if an agent completes 80 out of 100 customer support tickets solo, your AAR is 80%.

What is a good Agentic Autonomy Ratio for 2026?

For routine administrative or data-heavy tasks, a "good" AAR is typically between 80% and 90%. In complex or high-stakes fields like legal or medical sectors, a ratio of 50-70% is considered high-performing while maintaining safety.

Does a high AAR mean I can replace my human workers?

No, a high AAR means your human workers can focus on high-value strategy and creative problem-solving rather than repetitive tasks. The goal is to improve the "human-to-agent" ratio, allowing one person to manage dozens of autonomous systems.

What are the main barriers to achieving a high AAR?

The primary barriers include poor data quality, a lack of clarity around AI tool use, and rigid legacy systems that don't support API-driven actions. Improving AAR often requires upgrading your underlying technical infrastructure to be more "agent-friendly."

How does AAR differ from traditional automation metrics?

Traditional automation measures "efficiency" (speed/cost), while AAR specifically measures "independence" (decision-making). Automation follows a fixed script; agentic autonomy uses reasoning to navigate unexpected variables and reach a target goal.