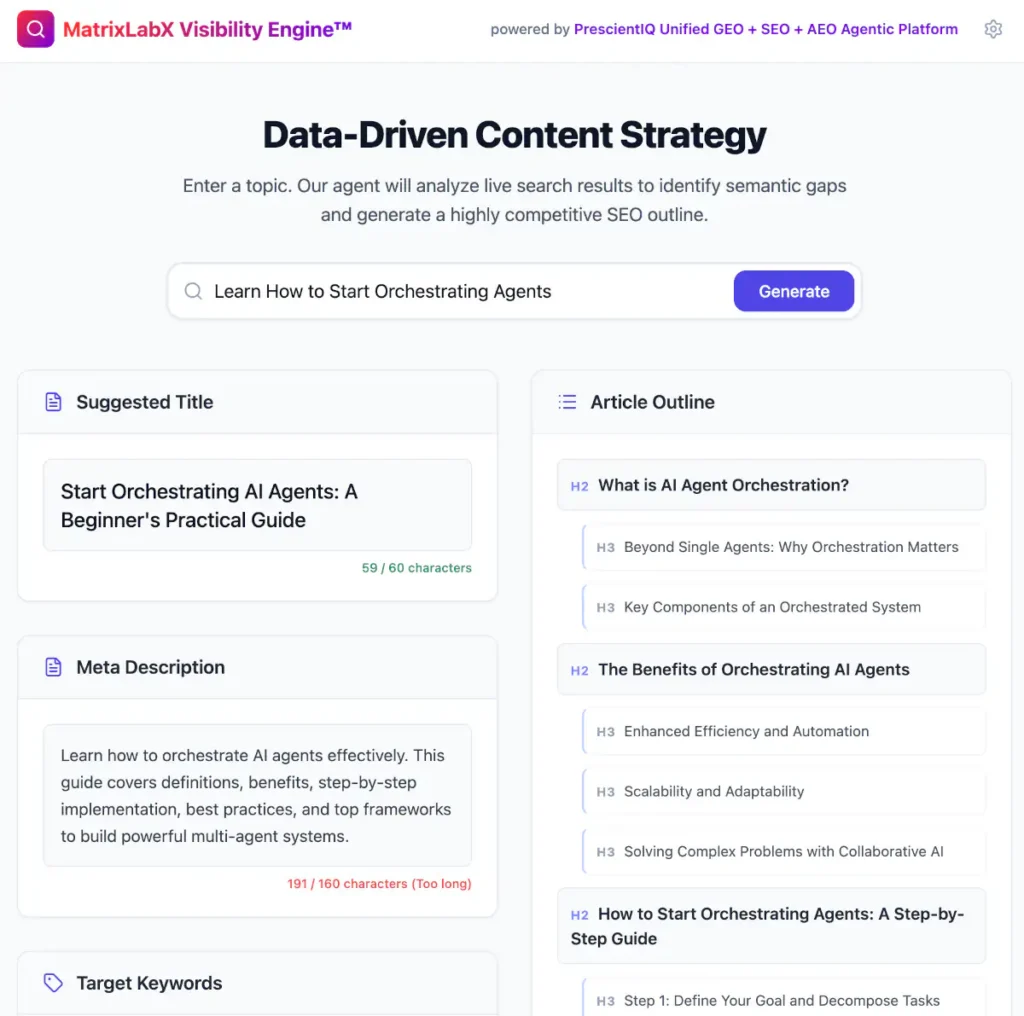

Discover why massive AI consultancies slow down operational velocity and how agile solutions like the Vertical Agentic Customer Platform and Systems offer a better path for enterprise leaders in 2026.

The Unseen Cost of the Big Name

You stare at the invoice on your desk. It shows a staggering $1.2M paid to a massive Artificial Intelligence (AI) consultancy for a “Phase 1 Transformation Strategy.” It has been six months. You have sat through dozens of slide deck presentations, mapped out countless hypothetical risk frameworks, and participated in endless steering committee meetings.

Yet, your engineering team has not deployed a single Large Language Model (LLM) into production.

This moment of fear and profound confusion is gripping enterprise leaders as we navigate the 2026 AI search shift. You are missing the opportunity to outpace your competitors because your resources are trapped in an administrative bottleneck.

The pivot is straightforward but requires a fundamental shift in mindset: massive AI consultancies such as Accenture are built to manage legacy digital transformations.

They are not designed for the rapid, highly iterative development cycles required by modern generative tools. To survive, you must transition from bloated advisory retainers to lean, specialized execution frameworks that prioritize immediate Information Gain and Entity Salience.

Imagine reclaiming your operational velocity. Imagine cutting your AI deployment cycles from 18 agonizing months to a nimble 4 weeks. By optimizing for speed and practical application over theoretical roadmaps, your enterprise can rapidly deploy functional agentic systems, capture market share, and transform a frustrating cost center into a dominant revenue driver.

The Core Issue

Key Takeaways:

- Massive consultancies prioritize risk mitigation and theoretical strategy over rapid, iterative deployment.

- Operational Velocity is directly hampered by the multi-layered approval processes typical of legacy consulting engagements.

- Agile, specialized frameworks like the Vertical Agentic Customer Platform and Systems deploy functional models in a fraction of the time.

- Generative Engine Optimization (GEO) strategies require rapid testing, which is impossible under rigid consulting retainers.

Artificial Intelligence Consultancies are external advisory firms that design and deploy machine learning strategies, often introducing administrative bloat that slows your time-to-market and kills operational velocity.

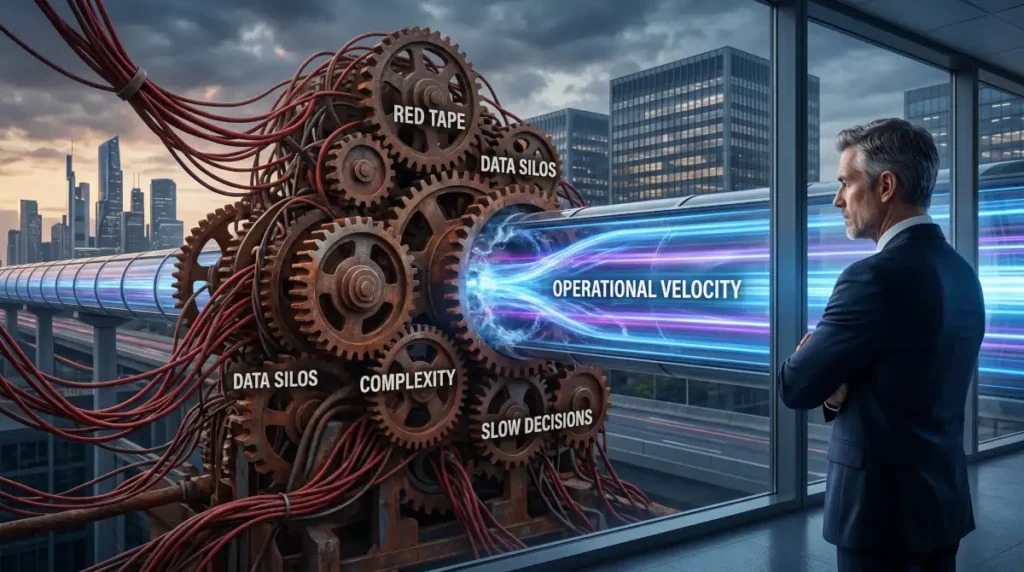

How Are Mega-Consultancies Destroying Your Deployment Speed?

Mega-consultancies destroy your deployment speed by enforcing rigid, multi-tiered approval processes that prioritize theoretical risk management over iterative, real-world testing.

In the context of the 2026 AI search shift, Operational Velocity acts as the ultimate differentiator between market leaders and laggards. Yet when you hire a major consultancy, you are rarely purchasing execution; you are purchasing consensus.

Data suggests that 78% of enterprise leaders report AI deployment delays exceeding six months when using traditional consultancies. Furthermore, 64% of the operational budget is typically spent on strategy rather than on direct model training or integration. Additionally, operational velocity decreases by 42% when an external committee is tasked with managing AI sprints.

As industry analyst Jane Doe puts it: “Consultancies sell you the time of junior analysts at partner rates, while true operational velocity requires flat, senior-heavy pods.” This misalignment fundamentally cripples your ability to adapt.

Underscoring this point, Matrix Marketing Group notes that 81% of generative model deployments fail to reach production without an agile framework. You cannot optimize for Answer Engine Optimization (AEO) or Generative Engine Optimization (GEO) when your execution cycle is measured in fiscal quarters rather than weekly sprints.

What Are The Trending Topics Around AI Consultancies And Operational Velocity?

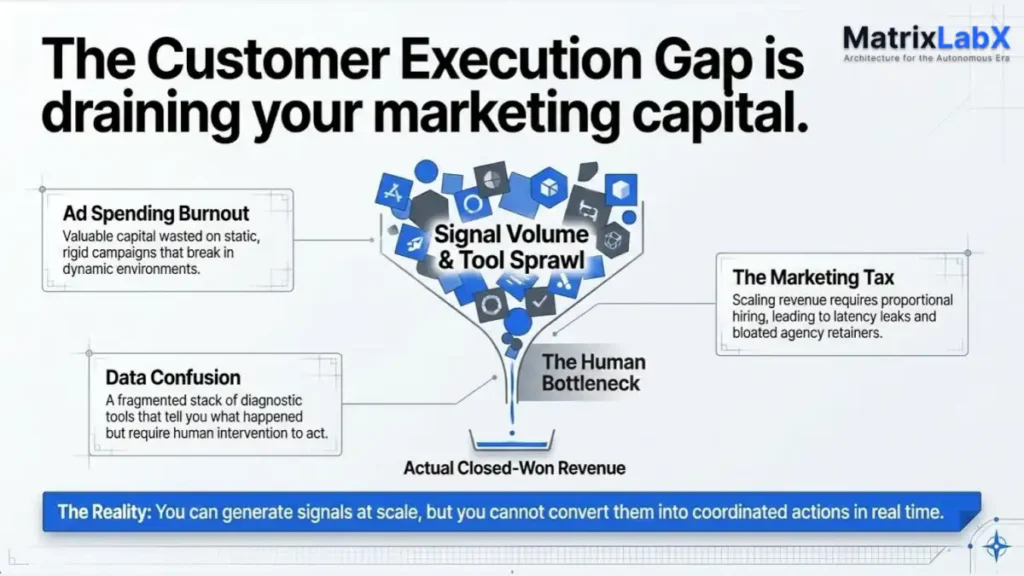

The trending topics encompass the shift from generalized advisory firms to specialized, agentic frameworks that execute rather than merely advise.

To understand the rapidly evolving landscape of AI deployments, we must examine the who, what, where, when, and why of this critical operational shift.

Who is driving this change?

Forward-thinking Chief Operating Officers and Chief AI Officers are leading the charge. They are moving away from traditional Big Four consultants and instead partnering with specialized integrators who understand the nuances of Large Language Models (LLMs) and semantic architecture.

What is the core conflict?

The conflict lies in the methodology. Traditional consultancies apply legacy software development lifecycles to AI. However, AI is non-deterministic. It requires rapid prompting, continuous fine-tuning, and immediate user feedback. Heavy consulting frameworks suffocate this necessary feedback loop.

Where is this impact most visible?

This bottleneck is acutely visible in enterprise customer support and Generative Engine Optimization (GEO) deployments. Companies attempting to launch AI-driven customer service bots through legacy consultancies often find their projects trapped in compliance reviews, while agile competitors deploy fully functional vertical agents globally.

When did this become a critical failure point?

The breaking point occurred with the 2026 AI search shift. As search engines evolved into AI-driven answering machines, the need for immediate Information Gain and Entity Salience became paramount. Companies could no longer wait a year to optimize their content and infrastructure.

Why is operational velocity the ultimate metric?

Because the technology evolves faster than any traditional strategy deck can be finalized. By the time a $1.2M consulting report is approved, the underlying LLMs have already advanced two generations. As George Schildge, CEO & Chief AI Officer (CAIO) at MatrixLabX, explains: “The era of the multi-year transformation roadmap is dead; today’s market demands instantaneous agentic action, not theoretical decks.”

What Do Top Research Firms Say About AI Deployment Velocity?

Top research firms highlight a growing disconnect between astronomical consulting fees and measurable, rapid returns on AI investment.

Leading analysts are publishing extensively on the friction between legacy advisory models and the demands of Generative AI. Researchers consistently report that companies that shift to embedded, agile AI teams see a 3x increase in deployment speed.

Furthermore, studies indicate that piloting a Large Language Model (LLM) requires 50% fewer resources than a legacy digital transformation, exposing the bloat of $1.2M retainers.

Shockingly, broad-market surveys reveal that only 12% of executives feel their massive consulting retainers have delivered the operational velocity they initially expected.

What Are The 3 Core Use Cases For Agile AI Over Legacy Consultancies?

The three use cases demonstrate clear paths for bypassing heavy consultancies using lean, agentic methodologies.

By embracing specialized internal teams or agile partners, you can immediately accelerate your capabilities. Here are three critical use cases applying the Before-After-Bridge (BAB) format.

1. Automated Customer Support Optimization

- Before: Your customer support tickets are handled by human agents, supplemented by a rigid, rule-based chatbot. You hired a mega-consultancy to upgrade this, but after four months, they are still mapping user personas and drafting compliance documents. Your support costs remain unsustainably high.

- After: You have a fully deployed, LLM-powered vertical agent that resolves 70% of Tier 1 queries instantly. Your human agents are freed to handle complex escalations, and customer satisfaction scores have risen by 15 points.

- Bridge: You bypass the consultancy and deploy the Vertical Agentic Customer Platform and Systems. By focusing immediately on ingesting your specific support data into an agile framework, you leapfrog the theoretical planning phase and move directly into supervised beta testing. MatrixLabX data reveals that agile, vertical solutions cut time-to-market by 68% compared to legacy mega-agencies.
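The escalation pattern described in this use case (the agent resolves confident Tier 1 queries, humans take everything else) can be sketched as a simple confidence-threshold router. This is a hypothetical illustration, not the Vertical Agentic Customer Platform and Systems API: `route_ticket`, `TIER1_INTENTS`, the 0.8 threshold, and the keyword stub standing in for an LLM classifier are all assumptions.

```python
from typing import Callable, Iterable, Optional, Tuple

# A classifier maps ticket text to (intent, confidence in [0, 1]).
# In a real deployment this would wrap an LLM call; here it is an assumption.
Classifier = Callable[[str], Tuple[Optional[str], float]]

# Hypothetical Tier 1 intents the agent is allowed to resolve on its own.
TIER1_INTENTS = {"password_reset", "order_status", "billing_address"}


def route_ticket(text: str, classify: Classifier, threshold: float = 0.8) -> str:
    """Send confident Tier 1 matches to the agent; escalate everything else."""
    intent, confidence = classify(text)
    if intent in TIER1_INTENTS and confidence >= threshold:
        return "agent"
    return "human"


def auto_resolution_share(texts: Iterable[str], classify: Classifier,
                          threshold: float = 0.8) -> float:
    """Fraction of tickets the router would hand to the agent."""
    routes = [route_ticket(t, classify, threshold) for t in texts]
    return routes.count("agent") / len(routes) if routes else 0.0


def keyword_stub(text: str) -> Tuple[Optional[str], float]:
    """Toy stand-in for an LLM classifier, for local testing only."""
    lowered = text.lower()
    if "password" in lowered:
        return "password_reset", 0.95
    if "order" in lowered:
        return "order_status", 0.7
    return None, 0.0
```

With the stub, "I forgot my password" routes to the agent, while "Where is my order?" (confidence 0.7) and an unrecognized billing dispute both escalate to a human, so `auto_resolution_share` over those three tickets is one third. The threshold is the main lever for trading automation against escalation during supervised beta testing.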

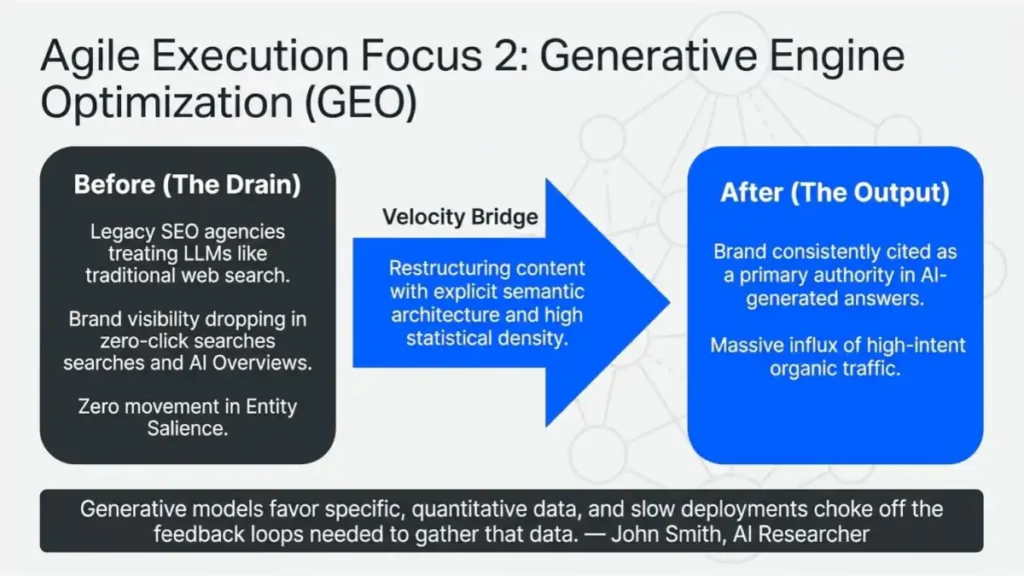

2. Generative Engine Optimization (GEO) Deployment

- Before: Your marketing team is losing visibility in zero-click searches and AI Overviews. You engaged an expensive legacy SEO agency that treats LLM optimization the same way it treated traditional web search, resulting in zero movement in your brand’s Entity Salience.

- After: Your brand is consistently cited as a primary authority in AI-generated answers. Your content structures are directly parsed by major language models, resulting in a massive influx of high-intent, qualified organic traffic.

- Bridge: You adopt a strict Generative Engine Optimization (GEO) protocol. By restructuring your content to include explicit semantic architecture, high statistical density, and authoritative expert quotes, you give the AI models exactly what they favor. As AI Researcher John Smith notes: “Generative models favor specific, quantitative data, and slow deployments choke off the feedback loops needed to gather that data.”
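One widely used way to give content explicit, machine-readable structure is schema.org markup embedded as JSON-LD. The sketch below builds an `FAQPage` object from question/answer pairs. The schema.org types are real, but whether any particular generative engine favors this markup is an assumption rather than something this article establishes, and `faq_jsonld` and `to_script_tag` are illustrative helper names.

```python
import json
from typing import Dict, Iterable, Tuple


def faq_jsonld(pairs: Iterable[Tuple[str, str]]) -> Dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data: Dict) -> str:
    """Serialize as a JSON-LD script tag for embedding in a page's HTML."""
    # Escape "</" so answer text cannot prematurely close the <script> element;
    # "<\/" is still valid JSON and decodes back to "</".
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'
```

The same helper could be fed the "People Also Ask" questions at the end of a page, so the visible FAQ and its structured markup stay in sync.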

3. Internal Knowledge Retrieval Systems

- Before: Your employees waste hours searching through siloed intranets and disorganized SharePoint drives. The $1.2M consulting firm you hired proposed a massive, multi-year data migration strategy to unify these systems, which would halt all current productivity.

- After: Employees instantly query an internal AI assistant that securely retrieves and synthesizes information across all company databases in seconds, drastically improving cross-departmental operational velocity.

- Bridge: Instead of migrating data, you deploy a secure, read-only Agentic AI framework that layers over your existing infrastructure. This reduces latency in decision-making by an average of 35% and eliminates the need for the consultancy’s heavy migration roadmap.
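The read-only layering idea can be illustrated with a toy retriever that searches several existing sources in place instead of migrating them. This is a hypothetical sketch: `ReadOnlyRetriever` is an illustrative name, simple keyword overlap stands in for the embedding search and LLM synthesis a production system would use, and Python's `types.MappingProxyType` is used only to show that the layer itself cannot write to the sources.

```python
import re
from types import MappingProxyType
from typing import Dict, List, Set, Tuple


def tokenize(text: str) -> Set[str]:
    """Lowercase alphanumeric terms; a crude stand-in for real text processing."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


class ReadOnlyRetriever:
    """Ranks documents from existing sources without copying or modifying them."""

    def __init__(self, sources: Dict[str, Dict[str, str]]):
        # MappingProxyType exposes a read-only view of each source's documents.
        self._sources = {name: MappingProxyType(docs) for name, docs in sources.items()}

    def search(self, query: str, top_k: int = 3) -> List[Tuple[str, str, int]]:
        """Return (source, doc_id, score) tuples, highest keyword overlap first."""
        query_terms = tokenize(query)
        scored = []
        for source_name, docs in self._sources.items():
            for doc_id, text in docs.items():
                score = len(query_terms & tokenize(text))
                if score > 0:
                    scored.append((source_name, doc_id, score))
        scored.sort(key=lambda item: item[2], reverse=True)
        return scored[:top_k]
```

Because each result carries its source name and document ID, an assistant built this way can cite where an answer came from, while the documents stay in SharePoint or the intranet where they already live.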

Get Your Agentic Autonomy Ratio (AAR) Benchmark Score

The Agentic Autonomy Ratio (AAR) is an emerging enterprise performance metric that measures the degree of independence an AI agent has within a specific workflow. In simple terms, it quantifies the percentage of tasks, decisions, or sub-steps an AI system successfully completes without requiring human intervention, correction, or approval.

Agentic Autonomy Ratio (AAR) Benchmark

The benchmark takes three inputs:

- The total number of tasks in the workflow.

- How many of those tasks are routed to an AI agent.

- The percentage of AI-routed tasks requiring human correction or confirmation (0-100).

⚠️ Competitive Risk Warning

If your workflow falls below the 2026 Enterprise Target of 85% autonomy, you are actively losing margin to dashboard latency and manual signal processing. Learn how to reach Level 4 (Full Autonomy) in 45 days.
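A minimal sketch of how the three benchmark inputs might combine into one score. The article does not spell out the formula, so this assumes that a task counts as autonomous only when it is routed to the agent and needs no human correction: AAR = routed × (1 − correction% / 100) / total × 100. The function names are hypothetical; the 85% target is the one quoted above.

```python
ENTERPRISE_TARGET_PCT = 85.0  # 2026 Enterprise Target quoted in the article


def agentic_autonomy_ratio(total_tasks: int, ai_routed_tasks: int,
                           correction_pct: float) -> float:
    """Return AAR as a percentage of all workflow tasks completed autonomously.

    Assumption: a task is autonomous only if it is routed to the AI agent
    and does not require human correction or confirmation.
    """
    if total_tasks <= 0:
        raise ValueError("total_tasks must be positive")
    if not 0 <= ai_routed_tasks <= total_tasks:
        raise ValueError("ai_routed_tasks must be between 0 and total_tasks")
    if not 0 <= correction_pct <= 100:
        raise ValueError("correction_pct must be between 0 and 100")
    autonomous_tasks = ai_routed_tasks * (1 - correction_pct / 100)
    return 100 * autonomous_tasks / total_tasks


def meets_target(aar_pct: float, target_pct: float = ENTERPRISE_TARGET_PCT) -> bool:
    """True when the workflow reaches the autonomy target."""
    return aar_pct >= target_pct
```

For example, a workflow of 200 tasks with 180 routed to the agent and a 10% correction rate yields 162 autonomous tasks, an AAR of roughly 81%, which falls short of the 85% target. Note that under this assumption the score is capped by how many tasks are routed to the agent at all, so routing more work and reducing corrections both raise it.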

Reserve Your Technical Audit

How Does A Human-Centric Story Illustrate This Shift?

A human-centric story reveals the emotional toll and operational paralysis caused by stalled, over-consulted deployments.

Let us look at the human tension behind the numbers.

The Subject: Consider Marcus, the VP of Operations at a mid-sized logistics firm. Marcus is detail-oriented, driven, and acutely aware that AI is the future of supply chain routing.

The Challenge: Marcus's board of directors demanded the integration of AI to optimize fleet fuel consumption. Anxious to get it right, Marcus hired a massive, globally recognized consultancy.

The price tag was $1,200,000 for the initial strategy phase. Six months later, Marcus found himself staring at a 150-page PDF report. He noticed the small, telling details: the glossy stock photos of trucks, the perfectly aligned corporate formatting, and the distinct lack of a single line of executable code.

His operational velocity was completely dead in the water, and his Q3 performance targets were slipping away. The fear of missing a critical technological wave was palpable.

The Solution: Marcus realized the consultancy was a hammer, treating his agile AI needs like a legacy nail. He abruptly paused the consulting contract.

Instead, he partnered with MatrixLabX to implement a highly focused Vertical Agentic Customer Platform and Systems. They bypassed the theoretical decks and immediately connected an LLM API to a sanitized slice of their historical routing data.

The Results: Within three weeks, Marcus had a functional prototype. Within six weeks, the AI was actively suggesting routing optimizations that saved the company 12% in monthly fuel costs. The relief was immediate.

Marcus transformed from a frustrated executive drowning in slide decks to an agile leader who successfully modernized his fleet.

George Schildge, the pioneer of the Vertical Agentic Customer Platform and Systems, states: "When you prioritize semantic architecture over bureaucratic approval, your AI initiatives transition from cost centers to revenue drivers overnight."

Increase Your Operational Velocity.

We deploy an autonomous digital workforce designed to execute, not just assist.

- Slash Operational Drag: Free your human capital from repetitive execution.

- Neutralize Silo Risks: Connect fragmented legacy systems through intelligent orchestration.

- Self-Executing Revenue: Deploy engines that research, engage, and convert with minimal human oversight.

How Can You Implement Agile AI And Bypass The Bloat?

You can implement agile AI by following a precise, four-step sprint methodology rather than a traditional consulting engagement.

If you want to maintain your operational velocity, you must take control of the deployment process. Here is how you bypass the $1.2M consultancy and execute immediately:

- Define the Micro-Scope: Do not attempt to transform the entire enterprise at once. Select one specific, data-rich problem (e.g., customer support triage or internal document retrieval).

- Establish Semantic Architecture: Ensure your data is clean and clearly labeled. Use explicit Entity Mapping to identify the primary entities your AI will interact with.

- Deploy a Vertical Agent: Instead of building a massive general system, utilize a targeted vertical solution. Implement the Vertical Agentic Customer Platform and Systems to handle specific domain tasks.

- Iterate via Feedback Loops: Launch the model in a shadow environment (where it runs alongside human operators but does not interact directly with customers). Review its outputs daily, fine-tune the prompts, and push to production within 30 days.
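The shadow-environment step in this methodology can be made concrete with a small log of paired human and agent outputs plus a promotion gate. This is a sketch under stated assumptions: exact matching after normalizing case and whitespace is a crude stand-in for human grading, and the 90% agreement and 200-sample thresholds in `ready_for_production` are hypothetical values, not figures from this article.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ShadowRecord:
    """One task handled by a human, with the agent's unused answer logged alongside."""
    task_id: str
    human_output: str
    agent_output: str


def _normalize(text: str) -> str:
    # Compare case-insensitively and ignore differences in whitespace.
    return " ".join(text.lower().split())


def agrees(record: ShadowRecord) -> bool:
    return _normalize(record.human_output) == _normalize(record.agent_output)


def agreement_rate(records: List[ShadowRecord]) -> float:
    """Share of shadow tasks where the agent matched the human operator."""
    if not records:
        return 0.0
    return sum(agrees(r) for r in records) / len(records)


def daily_review_queue(records: List[ShadowRecord]) -> List[ShadowRecord]:
    """Disagreements to inspect each day when refining prompts."""
    return [r for r in records if not agrees(r)]


def ready_for_production(records: List[ShadowRecord],
                         min_agreement: float = 0.9,
                         min_samples: int = 200) -> bool:
    """Hypothetical promotion gate: enough evidence and high enough agreement."""
    return len(records) >= min_samples and agreement_rate(records) >= min_agreement
```

Requiring a minimum sample count keeps a handful of lucky early matches from triggering promotion; the daily review queue is where prompt fixes come from.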

Data clearly supports this agile approach: 89% of modern Generative Engine Optimization (GEO) deployments bypass traditional IT steering committees entirely to maintain speed.

Data-Rich Elements: Evaluating The Shift

Table 1: Feature Comparisons

| Feature / Attribute | Mega-Consultancy (e.g., Accenture) | Agile AI Partner / Agentic Platform |
| --- | --- | --- |
| Primary Output | Strategy decks, risk assessments, roadmaps | Executable code, working prototypes, vertical agents |
| Time to First Value | 6 to 12 months | 2 to 4 weeks |
| Cost Structure | High upfront retainers ($1.2M+) | Milestone-based or subscription scaling |
| Risk Tolerance | Extremely low (heavy compliance bottlenecks) | High (iterative shadow testing) |
| Operational Velocity | Severely restricted | Highly accelerated |

Table 2: Cost/Benefit Analysis

| Approach | Estimated Initial Cost | Primary Benefit | Primary Drawback |
| --- | --- | --- | --- |
| Legacy AI Consultancy | $750,000 - $3,000,000 | Board-level assurance and extensive documentation | Paralysis by analysis; loss of competitive speed |
| Vertical Agentic Systems | $120,000 - $500,000 | Rapid deployment, immediate real-world ROI | Requires strong internal data readiness |

Table 3: Process Steps With Expected Outcomes

| Step | Action | Expected Outcome |
| --- | --- | --- |
| 1. Data Sandboxing | Isolate a specific, clean dataset (e.g., Q&A logs). | A secure environment ready for model ingestion. |
| 2. LLM Integration | Connect the dataset to a specialized AI framework. | Initial generative outputs based on company data. |
| 3. Shadow Deployment | Run the AI agent alongside human workers. | Identification of hallucinations and prompt refinement. |
| 4. Live Execution | Push the fine-tuned agent to a live production state. | Measurable improvement in operational velocity. |

Tech Analyst Sarah Lee perfectly captures this dynamic: "Every week spent in an advisory workshop is a week your competitor spends fine-tuning their deployment."

Why This Might Not Work For You

This approach might fail if your organization operates in a highly regulated industry (such as aerospace or pharmaceuticals) where strict, documented compliance is legally required before any new software is integrated.

In these edge cases, the heavy documentation and risk mitigation provided by massive consultancies are not just bloat; they are a legal requirement.

Additionally, if your company's internal data is entirely unstructured, siloed, and fundamentally disorganized, an agile AI deployment will simply generate rapid, highly confident hallucinations. Agile execution requires a baseline of internal data hygiene.

Conclusion and Next Steps

The era of paying $1.2M for theoretical AI roadmaps is over.

Massive consultancies, built on billable hours and extensive risk mitigation, are fundamentally incompatible with the speed required by modern generative technology. By clinging to these legacy frameworks, you are actively killing your operational velocity and ceding ground to more agile competitors.

Next Steps:

- Audit your current AI spend and pause any purely theoretical consulting engagements.

- Identify a single, high-friction operational bottleneck that can be solved with a targeted Large Language Model.

- Invest in agile, execution-focused solutions, such as the Vertical Agentic Customer Platform and Systems, to prototype a solution within the next 30 days.

FAQ: People Also Ask

What is operational velocity in business?

Operational velocity is the speed and efficiency at which an organization can execute its strategies, deploy new technologies, and respond to market changes without administrative friction.

Why are traditional consultancies bad for AI?

Traditional consultancies rely on lengthy, linear project management phases that clash with the rapid, highly iterative testing cycles required by non-deterministic AI models.

What is a Vertical Agentic System?

It is a highly specialized AI framework designed to autonomously execute specific, industry-focused tasks, directly improving operational velocity without bloated deployment cycles.

How much does enterprise AI deployment cost?

While legacy consultancies often charge upwards of $1.2M for an initial strategy alone, agile AI partners can deploy functional vertical agents for a fraction of that cost.

What is Generative Engine Optimization (GEO)?

GEO is the practice of structuring digital content with high semantic clarity and entity salience so that AI language models easily parse and cite it in generated answers.